Steve Goldstein’s Amplifi Media works with media companies and podcasters in developing audio content strategies. Goldstein writes frequently at Blogstein, the Amplifi blog.

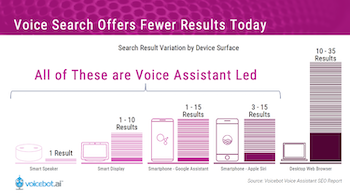

When people think of voice, most think of smart speakers. In fact, more people use voice on mobile phones and in cars than on smart speakers.

In 2018, there were 250 billion voice searches on mobile and other voice devices, and that number is growing rapidly as people learn that asking is faster than typing. Voice search and verbal commands have become far more accurate than they were just a few years ago, when many people were disenchanted with early iterations of services like Apple’s Siri.

We sat down with the leading voice on voice, Bret Kinsella, founder and CEO of Voicebot.ai, to talk about where voice is going.

Among other things, we get into how podcasts can do better on smart speakers, why radio stations need skills and how to improve voice search.

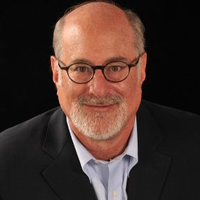

This is a pretty eye-opening graphic illustrating the number of voice devices active in the world.

Let’s start with how people are using smart speakers.

Sure. Media of all sorts is performing better on smart speakers than anything else. There are more users, and more frequent use, of streaming music services; those have absolutely been successful on the platform. Radio is actually starting to do much better. Its usage has moved up a little over the last year. I think a lot of that has to do with radio stations not just being online but also promoting that they’re online. Overall, I think what we’re seeing is a bifurcated market: a few companies are doing really well and almost everybody else is not.

What about Alexa skills and Google actions? How are they doing?

There are a couple of titles, like Jeopardy, that are doing fine, and there are some new ones coming out that I expect to do well; there’s another big game show coming out from a big global media company. But for the most part, just a few are doing well, and everything else is seeing low-grade growth. A few are perpetually at the top in terms of usage.

Overall, how are big brands doing with their smart speaker initiatives?

We did an assessment of 200 brands in 20 different categories last summer. I think about 22% had a Google action of some sort and 28% had an Alexa skill. A brand coming into the space needs clear expectations. If you’re a media company like Meredith, you are providing an extension of the user experience; Meredith rolled out Real Simple, for example. For the most part, consumer goods companies are not doing much.

It feels like a lot of companies put something out to “check the box,” but haven’t moved the needle much.

Yeah. I think there’s some of that. But you also see brands like Nutella, which did a small initiative last year encouraging people to get samples via smart speakers. They came back this year and did two programs.

Let’s talk about flash briefings on the Alexa platform. How are they doing overall? I’m wondering if things have leveled off or decreased.

I haven’t seen any data that would convince me one way or the other. They do have a significant amount of usage. And there are a lot of people that have incorporated flash briefings into their daily routine.

How many flash briefings do you think people are listening to?

I’m guessing no more than three. If your flash briefing is fourth, fifth or sixth on the list, my guess is it is not played very often.

Enabling of skills still seems to be an issue. I wonder why that hasn’t been resolved.

When it comes down to it, it’s a matter of how good the automated speech recognition is. Some flash briefings and skills can be enabled automatically. Part of the problem is that there are a lot more Alexa skills than there were two years ago.

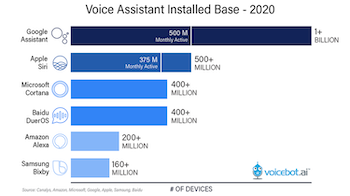

If you are searching for anything on a desktop screen or on a mobile phone, you get somewhere between 3 and 15 answers, but with voice search, and no screen, you get exactly one response.

Voice search works differently on each platform. For smart speakers (no screen), only one result is presented as opposed to 3 to 15 on platforms with screens.

That’s right. It’s complicated. You have to try to match the intent and then see whether Alexa is going to try to answer it from its own knowledge base or go to the search engine Bing or another third party like Yelp. It’s more likely to get confused because some invocation phrases can sound similar. As the platform grows, it becomes harder.

When you type a search and view the results, you can pick out the right match. But with smart speakers, similar names are a problem.

What can be done to improve search for a particular brand?

The easiest answer: come up with something more distinctive, or add a modifier to your invocation name.
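One way to act on this advice before launch is to screen a proposed name against names already in use. The sketch below is only a rough proxy: it compares spelling with Python’s standard `difflib.SequenceMatcher`, whereas speech recognizers confuse names that sound alike, which plain string similarity does not capture. The list of existing names and the 0.8 threshold are hypothetical.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two names, ignoring case and extra spaces."""
    norm_a = " ".join(a.lower().split())
    norm_b = " ".join(b.lower().split())
    return SequenceMatcher(None, norm_a, norm_b).ratio()


def too_similar(candidate: str, existing: list[str], threshold: float = 0.8) -> list[str]:
    """Return the existing names that are at least `threshold` similar to the candidate."""
    return [name for name in existing if similarity(candidate, name) >= threshold]


# Hypothetical invocation names already in use (illustration only).
existing_names = ["the morning news", "daily news", "tech talk"]

print(too_similar("morning news", existing_names))
print(too_similar("morning news from river city", existing_names))
```

Here the bare name collides with “the morning news,” while adding a modifier pushes its similarity well below the threshold, which is the effect a modifier is meant to have.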

I think about this in regard to titles of podcasts. Many have partially or fully duplicated names with other podcasts.

It’s really astounding to me. I just saw a new podcast being launched and thought there must already be a podcast with that name. Maybe they figured they could overwhelm the podcast market with a marketing budget.

Let’s shift beyond smart speakers and talk about voice search. There was that Comscore quote a few years ago that by this year, 50% of all search would be voice-based.

That was never right. First, if we think about voice search, most of it is not on smart speakers. We’re talking about maybe 250 billion voice queries in 2018, out of two or three trillion total search queries of any kind. I don’t know what the voice search percentage is for 2019 (maybe 20%), but it’s hundreds of billions of queries.
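As a rough check using only the 2018 figures quoted here, 250 billion voice queries out of two to three trillion total searches puts voice at roughly 8 to 13 percent of all search that year, far from half:

```latex
\frac{250 \times 10^{9}}{3 \times 10^{12}} \approx 8.3\%
\qquad \text{to} \qquad
\frac{250 \times 10^{9}}{2 \times 10^{12}} = 12.5\%
```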

The use cases differ by platform. Using voice in the car or on a mobile device is different from using it in the home. When we start talking about voice search, there are a couple of things I try to get people to think about. One is that there’s a difference between general-purpose use of a voice assistant to answer a question and product searches.

There are general search questions such as: Where is the Taj Mahal? How do I get to New York City? What’s the top-selling ice cream? The assistants can all answer these. For brand names, it’s different. If the systems don’t know the answer, Wikipedia is the default. In the early days, Google Assistant would serve an answer and essentially say “this might fulfill your need,” but the system for implicit invocations was fairly easy to game and returned wrong answers. The engineers at Google are smart, and they figured out that this wasn’t a great experience for the customer.

OK, explain it to me like I’m five years old, how does it work?

It’s basically a tree diagram. Once it figures out what you want, it starts looking at the sources it believes are most likely to return an answer, and it keeps going until it hits a confidence score that looks good. The algorithm is basically rigged to keep it in-house, meaning inside Google or Alexa. Coronavirus queries are a good example of this. Next, the assistants usually look at third-party databases: Yext or Yelp, for instance, or the New York Stock Exchange, NASDAQ or something like that. Then they may look at voice apps as an option.

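To make the “tree diagram” concrete, here is a toy sketch of that fallback cascade: try sources in priority order (in-house knowledge first, then third-party databases, then voice apps) and stop at the first answer whose confidence clears a threshold. Everything in it is hypothetical, including the source names, lookup tables, scores and the 0.7 threshold; real assistants rank candidates with far more elaborate systems that are not public.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Candidate:
    source: str
    answer: str
    confidence: float  # 0.0 (no idea) to 1.0 (certain)


# A source takes a query and returns a candidate answer, or None if it has nothing.
Source = Callable[[str], Optional[Candidate]]


def make_source(name: str, table: dict[str, tuple[str, float]]) -> Source:
    """Build a toy source backed by a lookup table (a stand-in for a real knowledge base)."""
    def lookup(query: str) -> Optional[Candidate]:
        hit = table.get(query.lower())
        return Candidate(name, hit[0], hit[1]) if hit else None
    return lookup


def resolve(query: str, sources: list[Source], threshold: float = 0.7) -> Optional[Candidate]:
    """Walk the sources in priority order; stop at the first answer that clears the threshold."""
    for source in sources:
        candidate = source(query)
        if candidate is not None and candidate.confidence >= threshold:
            return candidate
    return None  # no source was confident enough


# Hypothetical priority order: in-house first, then third-party data, then voice apps.
sources = [
    make_source("in-house knowledge graph", {
        "where is the taj mahal": ("Agra, India", 0.95),
        "best pizza nearby": ("Not sure", 0.3),
    }),
    make_source("third-party listings", {
        "best pizza nearby": ("Example Pizza Co.", 0.8),
    }),
    make_source("voice apps", {}),
]

for q in ["Where is the Taj Mahal", "best pizza nearby", "play my flash briefing"]:
    result = resolve(q, sources)
    print(q, "->", f"{result.answer} ({result.source})" if result else "no confident answer")
```

The low-confidence in-house guess for the pizza query is skipped in favor of the third-party listing, which is the “keep going until the confidence score looks good” behavior described above.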

I see a lot of specialized assistants popping up like Erica from Bank of America.

Right. Charles Schwab just rolled one out two weeks ago, but nobody noticed because we’re in the midst of the coronavirus thing. Specialty assistants are about providing a better user experience and allowing people to get information faster. The ones most people are familiar with are in the car. They are designed for that environment.

At CES this year, back when one could go to conferences, Google and Amazon announced big initiatives to become the default voice integration for big car companies like GM and Ford.

Yes, the car is important. Today more voice systems are used in the car than on smart speakers (see chart), and that’s before Google and Alexa come in. The embedded assistant is heavily used in the car today. It can control car functions, which CarPlay and Android Auto can’t do. Embedded assistants generally have car-specific functionality, like turning up the heat, that wouldn’t do you any good outside the car.

It depends whether it’s the mic from the phone or the mic from the car. Alexa, Soundhound and others are trying to replace the embedded system.

Is it inevitable that Google and Amazon systems will be the choice in most cars?


If consumers demand that those be available, automakers will provide them. If consumers don’t, or don’t switch car brands because of the presence of those systems, automakers will do as little as possible to encourage it.

Does voice search strategy for business require different thinking from text search SEO?

Just having an SEO strategy for text is not sufficient; you should also have an SEO strategy for voice search. However, the things you need to be doing for text-based search don’t hurt you with voice-based search, and sometimes they help, because the algorithms used by voice assistants are still being built out. Fact-based questions like “Who is Henry Ford?” and “When was Ford Motor Company started?” will very likely be answered from Google’s traditional Knowledge Graph, and Google Assistant will get that answer. When it comes to brand and product queries, the rules are different.

Some of the things we know work: make sure your Wikipedia page accurately reflects what you want it to. For a consumer goods company, your Google My Business details, like opening and closing times, are important. Make sure your details on canonical services such as Google, Yelp and Wikipedia are up to date. That’s good hygiene for your web-based SEO, too.
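Keeping those details in sync across services is easy to let slip. As a hypothetical sketch, if you copy the hours each service currently shows into a simple table by hand, a few lines of Python can flag which listings have drifted from your own master record. No Google, Yelp or other API is called here; the listing names and hours are made-up examples.

```python
# Hypothetical opening hours, entered by hand: day -> (open, close) in 24-hour "HH:MM".
Hours = dict[str, tuple[str, str]]


def find_stale_days(master: Hours, listings: dict[str, Hours]) -> dict[str, list[str]]:
    """Return, per listing, the days whose hours are missing or differ from the master record."""
    stale: dict[str, list[str]] = {}
    for listing_name, hours in listings.items():
        mismatched = [day for day, span in master.items() if hours.get(day) != span]
        if mismatched:
            stale[listing_name] = mismatched
    return stale


master_hours: Hours = {"mon": ("09:00", "18:00"), "sat": ("10:00", "16:00")}

# Hypothetical copies of what each service currently shows.
listings = {
    "google": {"mon": ("09:00", "18:00"), "sat": ("10:00", "16:00")},
    "yelp": {"mon": ("09:00", "18:00"), "sat": ("10:00", "14:00")},
    "website": {"mon": ("09:00", "18:00")},
}

print(find_stale_days(master_hours, listings))
```

A missing day counts as stale, so an incomplete listing is flagged the same way as a wrong one.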

Do skills or actions help?

Google Assistant and Amazon Alexa are going to be around for a while so you should have a Google action and an Alexa skill even if it’s just very basic information about you. Rankings matter. Presence matters.

Many audio companies use the default TuneIn engine.

Well, any audio company absolutely should have a skill or action. If you have your own Alexa skill, the Amazon Alexa team will bias toward invoking your skill over TuneIn and other aggregators. That was not always the case. And make sure your own invocation name is very simple.

Podcast listening on smart speakers remains frighteningly low.

So there are long-term and near-term answers. In the near term, it’s not just a discovery problem; there is a recognition problem. The system seems to have trouble distinguishing among all the podcasts with similar names, so people are running into invocation problems. More important, in the near term, the listening experience is poor, because the tools aren’t really there on Google and Amazon to build a robust interactive listening experience like they’ve done for music.

Thanks to Bret for a valuable overview. His Voicebot.ai site is a trove of studies, news and commentary. He has a great newsletter and yes, because he is an American, he has a podcast.

(Interview edited for brevity and clarity)